@sckottie @rdmpage @christgendreau Three small, early adopting projects should show expected network of synergies if JSON-LD actually useful

— David Shorthouse (@dpsSpiders) February 1, 2016

In a recent Twitter conversation including David Shorthouse and myself (and other poor souls who got dragged in) we discussed how to demonstrate that adopting JSON-LD as a simple linked-data friendly format might help bootstrap the long-awaited "biodiversity knowledge graph" (see below for some suggestions for keeping JSON-LD simple). David suggests partnering with "Three small, early adopting projects". I disagree.

I think we need to approach this problem not from the perspective of who would like to try JSON-LD, but by asking what it would take for it to be really useful. I think if we look at the structure of the biodiversity knowledge graph and at who has what identifiers, we can gain some insight into what the next steps are.

Sinks

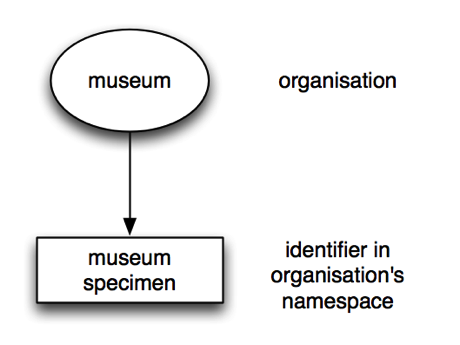

A fundamental problem is that many data providers are essentially dead ends in terms of building a network. They have data, but no connections, and so they are "sinks". A data browser goes there and can't go any further; it has to retrace its steps and go somewhere else. For example, your typical museum might serve up its collection data like this:

The museum has its own identifiers (e.g., a URL) and that's the only identifier in the data. Nomenclators are much the same:

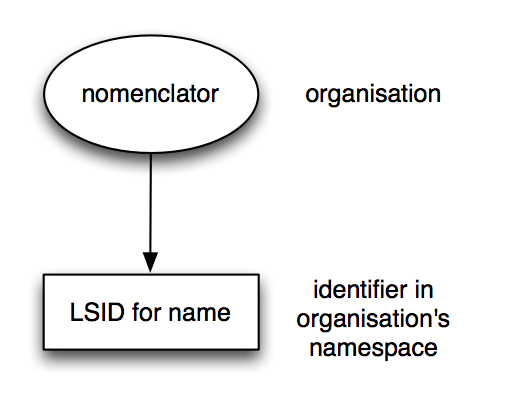

You get a nomenclator-specific identifier such as an LSID, but no other identifiers. Once again, if you are a data-crawler traversing the web of data, this is a dead end. (This is why I've become obsessed with linking nomenclators to the primary literature: I want to stop them being dead ends.)

Connected sources

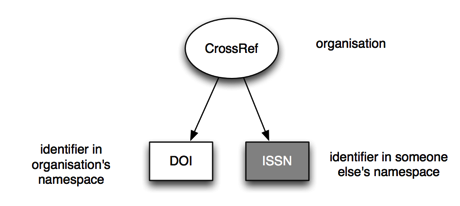

Then there are sources which have at least one external identifier, that is, an identifier that they themselves don't control. For example, CrossRef manages DOIs for articles, but if you get metadata for a DOI you also get an ISSN (identifying a journal). Now we have a connection to an external source of data, so we can traverse the data graph further, which means we can start asking questions (such as how many articles in this journal have DOIs?).
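The distinction between sinks and connected sources can be made concrete with a small sketch. The table of sources below is a deliberately simplified assumption (the `own` and `exposed` sets are illustrative, not a survey of what each provider actually returns); the point is only that a source is a sink when it exposes no identifier type it doesn't itself control.

```python
# Illustrative only: these sets are simplified assumptions, not a description
# of what each provider's API really returns.
SOURCES = {
    "museum": {"own": {"URL"}, "exposed": {"URL"}},
    "nomenclator": {"own": {"LSID"}, "exposed": {"LSID"}},
    "CrossRef": {"own": {"DOI"}, "exposed": {"DOI", "ISSN"}},
}


def external_identifiers(source):
    """Identifier types a source exposes but does not itself control."""
    return source["exposed"] - source["own"]


def is_sink(source):
    """A sink exposes no identifiers beyond its own."""
    return not external_identifiers(source)


for name, source in SOURCES.items():
    kind = "sink" if is_sink(source) else "connected"
    print(f"{name}: {kind} {sorted(external_identifiers(source))}")
```

With these assumed inputs the museum and the nomenclator come out as sinks, while CrossRef is connected by virtue of the ISSN.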

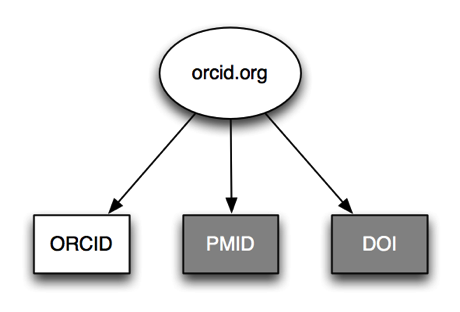

Another connected source is ORCID:

This gives us identifiers for authors (the ORCID) linked to article identifiers such as DOIs and PubMed ids (PMID). Follow the DOI to CrossRef and we can link people to articles to journals.

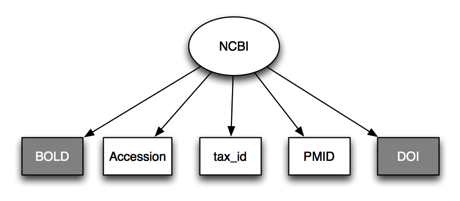

Another connected source is the NCBI:

NCBI has several internal identifiers (PMIDs, GenBank accession numbers, tax_ids) all of which lead to rich resources, and it's possible to get a lot of information by staying within NCBI's own silo, but there are external links such as DOIs (usually found attached to PubMed articles or to GenBank accessions) and links to external records such as DNA barcodes in BOLD.

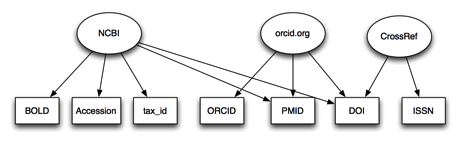

Let's join CrossRef, ORCID, and NCBI together:

Now we have a bipartite graph linking sources with identifiers. We can imagine playing a game where we try to connect different entities by moving through the graph. For example, we could take an author identified by an ORCID, follow a DOI to a PMID, a PMID to a set of accession numbers, and then be able to list all the sequences that an author has published. We could also step back and ask questions about which identifiers are the most useful in terms of making connections between different sources, and which sources provide the most cross links.
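Here is a minimal sketch of that game, assuming we have already harvested a list of links, each recording which source asserted that two identifiers are connected. Every identifier value below is a made-up placeholder, and `LINKS`, `neighbours`, and `follow` are hypothetical names introduced purely for illustration.

```python
# Each link is (source, identifier_a, identifier_b): the named source asserts
# that the two identifiers are connected. All values are made-up placeholders.
LINKS = [
    ("ORCID", "ORCID:author-1", "DOI:10.9999/example.1"),
    ("ORCID", "ORCID:author-1", "DOI:10.9999/example.2"),
    ("CrossRef", "DOI:10.9999/example.1", "ISSN:0000-0001"),
    ("CrossRef", "DOI:10.9999/example.2", "ISSN:0000-0001"),
    ("NCBI", "DOI:10.9999/example.1", "PMID:1"),
    ("NCBI", "PMID:1", "GenBank:XX000001"),
    ("NCBI", "PMID:1", "GenBank:XX000002"),
]


def neighbours(identifier, wanted_prefix):
    """Identifiers of type `wanted_prefix` directly linked to `identifier`."""
    found = set()
    for _source, a, b in LINKS:
        if a == identifier and b.startswith(wanted_prefix + ":"):
            found.add(b)
        elif b == identifier and a.startswith(wanted_prefix + ":"):
            found.add(a)
    return found


def follow(start, path):
    """Walk from `start` through each identifier type in `path` in turn."""
    frontier = {start}
    for prefix in path:
        frontier = {n for ident in frontier for n in neighbours(ident, prefix)}
    return frontier


# Author -> articles -> PubMed records -> sequences.
sequences = follow("ORCID:author-1", ["DOI", "PMID", "GenBank"])
print(sorted(sequences))

# Which identifier types are asserted by more than one source, and so do the
# work of joining different sources together?
sources_per_type = {}
for source, a, b in LINKS:
    for ident in (a, b):
        sources_per_type.setdefault(ident.split(":")[0], set()).add(source)
bridges = {t: sorted(s) for t, s in sources_per_type.items() if len(s) > 1}
print(bridges)
```

In this toy data only the DOI is shared by more than one source, which is the kind of observation the second question is after. Note too that the second article has no PMID link, so the walk silently drops it: a missing link costs you everything downstream of it.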

Filling in the gaps

Now, the graph above is obviously incomplete. I've restricted it to a few of the key services that I'm familiar with and that make use of external identifiers. One of the big obstacles to fleshing out the biodiversity knowledge graph is the frequent lack of reuse of identifiers. It's not enough to pump out data in linked-data form; you need to build the links. And not just "same as" style links connecting multiple identifiers for the same thing: you need to connect identifiers for different things. Until we tackle that, linked data approaches will not deliver much in the way of value. Hence we need sources that provide genuinely linked data by reusing existing, external identifiers. If we have a name in a nomenclator with a citation string, it needs to link that dumb literature string to a DOI. If we have a taxon concept, it needs to link the name of that taxon to a name in a nomenclator. If we have a specimen that has been sequenced, it needs to give the accession number of that sequence.

From this perspective, choosing which kinds of data with which to explore JSON-LD and a linked data graph should be driven by how connected those sources are: the more connected, the more interesting the questions that we can ask. Sadly the vast majority of biodiversity data providers don't provide the kind of connected data we need, which means we continually pay lip service to linked data without feeding it the kind of data it needs in order to grow.

* Notes on JSON-LD

It is relatively easy to write horrible JSON-LD, so I think it would be useful to strive to make it as simple and as human-readable as possible (ignoring the @context block, which is always going to be awful; this is the price we pay for simplicity elsewhere). To this end I think we should do at least the following:

- No URLs as identifiers. URLs are ugly, and they are not reliable as identifiers. Providers can change URLs, even for persistent identifiers. The DOI prefix has changed from http://dx.doi.org/ to the preferred http://doi.org/, and the rise of HTTPS (prompted by concerns about security, among other issues, see Google's motives, part 2) is going to break a lot of older URLs (see Web Security - "HTTPS Everywhere" harmful). These changes keep happening, so let's try to shield ourselves from them by using standard prefixes for identifiers (such as 'DOI' for a DOI). Put all the alternative URL resolver prefixes in the @context block and use prefixes such as those standardised by http://identifiers.org (which basically formalises what many people in bioinformatics have been doing for a while).

- No prefixes for keys. In other words, no CURIEs. Don't write "dwc:CatalogueNumber", just write "CatalogueNumber". I don't want to be told where the term comes from (that's what @context is for). If you have a namespace clash (i.e., two terms that are the same unless you include the namespace) then IMHO you're doing it wrong. Either you're using more than one vocabulary for the same thing (why?), or you're not modelling the data at the appropriate level of granularity. Either way, let's avoid clutter and keep things simple and readable.
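To make the two suggestions above concrete, here is a rough sketch of a clean-up step that rewrites DOI URLs as prefixed identifiers and drops namespace prefixes from keys, pushing everything ugly into @context. The function names, the list of resolver prefixes, the example record, and the example.org vocabulary IRIs are all assumptions made for illustration; a real context would point at the actual vocabulary terms.

```python
import json

# Resolver prefixes seen in the wild; illustrative, not exhaustive.
DOI_RESOLVERS = (
    "http://dx.doi.org/",
    "https://dx.doi.org/",
    "http://doi.org/",
    "https://doi.org/",
)


def compact_doi(value):
    """Rewrite a DOI URL as 'DOI:<doi>'; leave anything else untouched."""
    if not isinstance(value, str):
        return value
    for resolver in DOI_RESOLVERS:
        if value.lower().startswith(resolver):
            return "DOI:" + value[len(resolver):]
    return value


def strip_key_prefix(key):
    """Turn 'dwc:CatalogueNumber' into 'CatalogueNumber'; keep '@' keywords."""
    if key.startswith("@"):
        return key
    return key.split(":", 1)[-1]


# Hypothetical context: the vocabulary IRIs are placeholders, not real terms.
CONTEXT = {
    "DOI": "https://doi.org/",
    "CatalogueNumber": "http://example.org/terms/CatalogueNumber",
    "citation": {"@id": "http://example.org/terms/citation", "@type": "@id"},
}


def simplify(record):
    """Return a JSON-LD document with plain keys and prefixed identifiers."""
    body = {strip_key_prefix(k): compact_doi(v) for k, v in record.items()}
    return {"@context": CONTEXT, **body}


raw = {
    "dwc:CatalogueNumber": "ABC 12345",
    "citation": "http://dx.doi.org/10.9999/example.1",
}
print(json.dumps(simplify(raw), indent=2))
```

The body of the resulting document contains only "CatalogueNumber" and a "DOI:"-prefixed citation. If the preferred resolver changes again, only the "DOI" entry in @context needs to be edited, not every record.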